Gravitas LMS

A full-stack learning management system built to showcase production-ready AI grading. Next.js frontend, PostgreSQL on Supabase, and microservices for LLM-based feedback, all wired together under a single authenticated platform.

Overview

Final-year capstone project, built alongside the automated grading research at the University of Nottingham Malaysia. Gravitas is a learning management system with enough depth to be a credible platform, not just a demo wrapper for the automated grading subsystems.

What it does

- Role-based access for admins, teachers, and students, with Row-Level Security enforced at the PostgreSQL layer so a student cannot modify their own grade row regardless of what the application layer does.

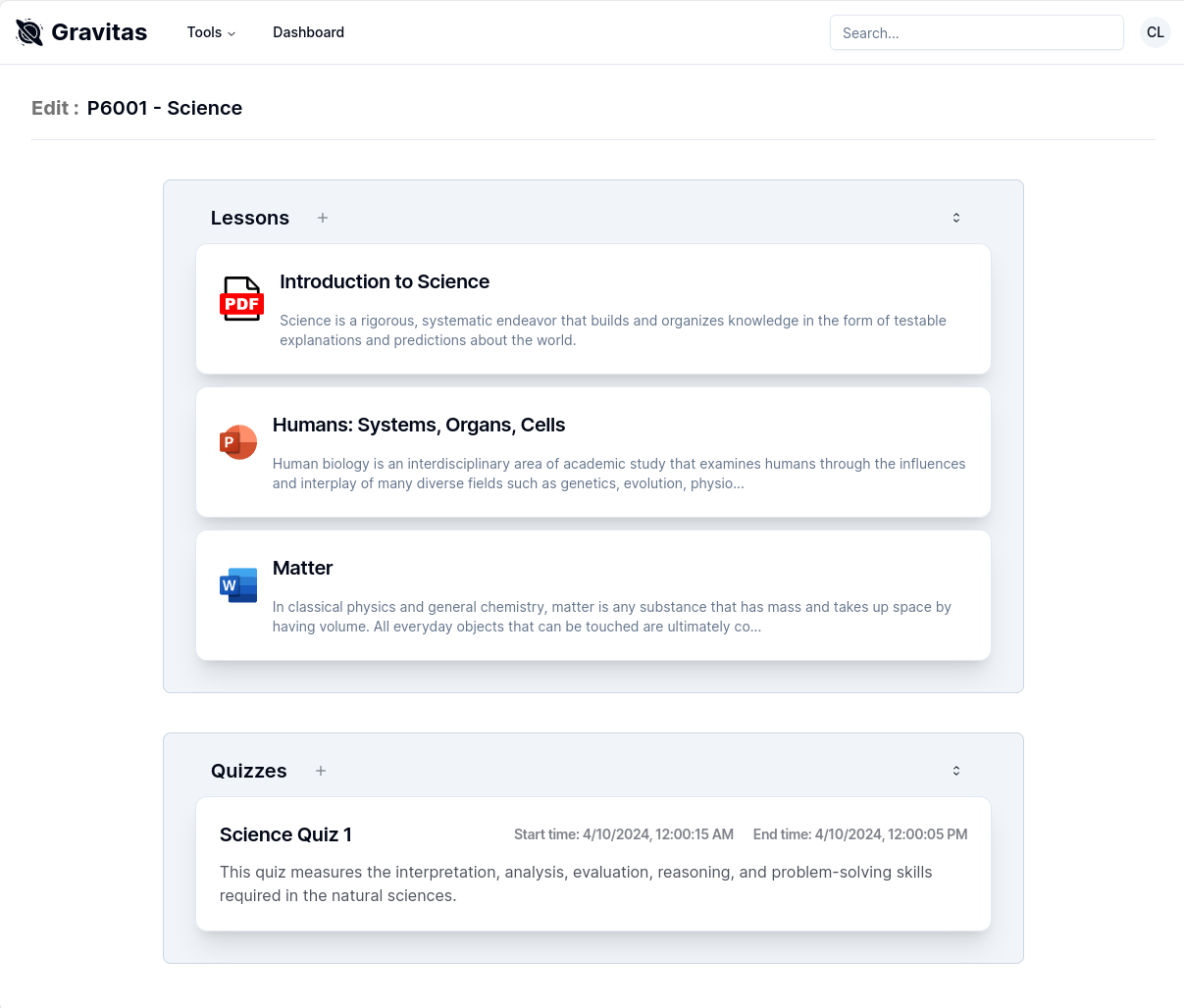

- Module and enrollment management: teachers create modules with a code, enroll class cohorts, upload lesson resources (PDF, PPTX) to S3 via Supabase Storage, and organize lessons with explicit display ordering.

- Quiz authoring and submission: teachers define questions with reference answers and weightages; students submit answers in a timed window; the interface prevents end-time selection before start-time, eliminating a class of input errors at the UI level rather than catching them server-side.

- Automated grading triggered on demand: the LMS fires the full grading pipeline (STS NLP model via Triton on Vast.ai, NER service on Google Cloud Run, and Llama 3 via Replicate), then bulk-upserts scores and feedback to the database when complete.

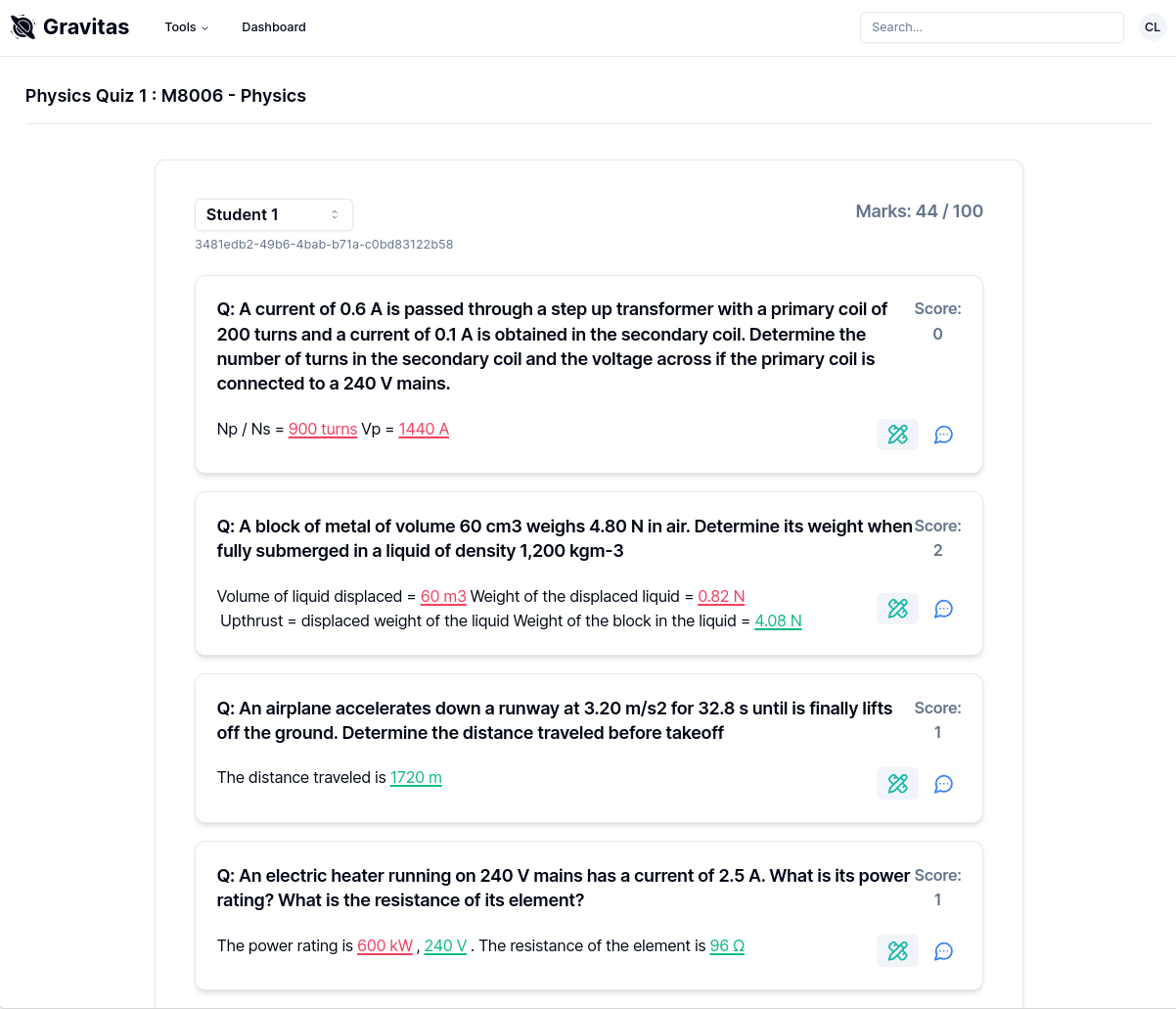

- Graded submission view with hover cards that reveal per-answer NER matches and unit comparisons inline, so teachers can see exactly why a score was assigned.

- Google Lighthouse scores of 97–100 for performance and 100 for best practices across all six tested pages, measured under throttled network conditions.
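The start/end constraint from the quiz authoring item above can be sketched as a small pure check that a form runs before enabling submission. This is only an illustration: `QuizWindow`, its field names, and `validateQuizWindow` are hypothetical and not taken from the Gravitas codebase.

```typescript
// Hypothetical shape for a quiz's availability window; field names are assumptions.
interface QuizWindow {
  startsAt: Date;
  endsAt: Date;
}

// Returns an error message when the window is invalid, or null when it is acceptable.
function validateQuizWindow(quizWindow: QuizWindow): string | null {
  const start = quizWindow.startsAt.getTime();
  const end = quizWindow.endsAt.getTime();
  if (Number.isNaN(start) || Number.isNaN(end)) {
    return "Start and end times must be valid dates.";
  }
  if (end <= start) {
    return "End time must be after start time.";
  }
  return null;
}
```

A date picker could call this on every change and disable the save button while the result is non-null, so an inverted window never reaches the server.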

Stack choices

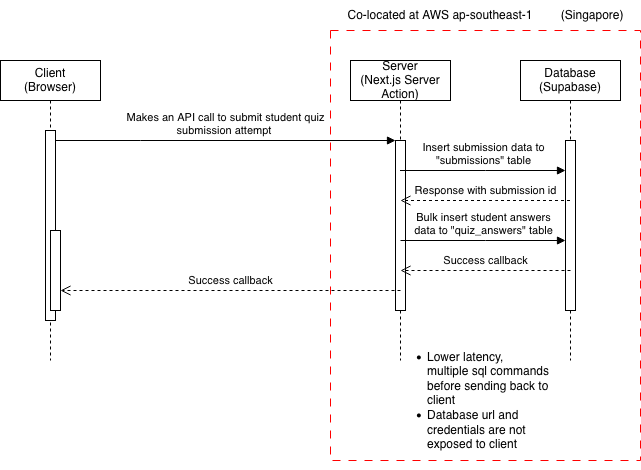

The frontend is Next.js using the App Router, with server components handling data fetching and server actions executing database writes. Keeping queries server-side has two effects: credentials never reach the browser, and the round-trip latency between the server and the Supabase PostgreSQL instance (co-located in AWS ap-southeast-1) is a fraction of what a client-side fetch would pay. First Contentful Paint (FCP) is consistently 0.3 seconds across all pages as a direct result: with server components, the browser receives pre-rendered HTML rather than a blank shell waiting on JavaScript.

Styling uses Tailwind CSS and Shadcn UI. Shadcn was chosen over heavier component libraries because it ships source, not a black-box bundle: components live in the codebase and can be modified without fighting the library's opinions. It also follows WCAG accessibility standards out of the box and adds negligible weight to the bundle, which matters when the performance budget is tight.

Forms are handled by React Hook Form with Zod schemas for validation. Zod's TypeScript-first design means the same schema that validates at runtime also infers the TypeScript type used throughout the component, eliminating the double-declaration problem common in form-heavy apps. State management beyond local component state uses Zustand, which avoids prop drilling across the LMS's deep component tree without the boilerplate overhead of a full Redux setup.

The database is PostgreSQL on Supabase. The schema tracks members, permissions, classes, enrollments, modules, lessons, assignments, quizzes, quiz questions, quiz answers, and submissions. Relationships that are many-to-many (students to classes, students to modules) use explicit junction tables rather than array columns, keeping join queries straightforward. Enum types are used for completion status; the timestamptz type is used throughout for timestamps so daylight savings does not silently corrupt scheduling data.

Authentication is delegated entirely to Supabase Auth. When a user registers, a database trigger populates the public members and permissions tables from the auth.users record. Every subsequent request carries a JWT, which Next.js middleware inspects before the route handler runs, redirecting unauthorized access before any data is touched. The JWT is stored in a browser cookie and refreshed automatically by the Supabase auth server on expiry.
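The redirect decision made in middleware can be sketched as a pure function, separate from any framework API. This is a minimal sketch under stated assumptions: the route prefixes, the `/login` and `/unauthorized` targets, and the name `resolveRedirect` are all hypothetical. Only the three roles come from the description above.

```typescript
type Role = "admin" | "teacher" | "student";

// Hypothetical route prefixes and the roles allowed to reach them (assumptions).
const ROUTE_RULES: ReadonlyArray<{ prefix: string; allowed: ReadonlyArray<Role> }> = [
  { prefix: "/admin", allowed: ["admin"] },
  { prefix: "/teach", allowed: ["admin", "teacher"] },
  { prefix: "/learn", allowed: ["admin", "teacher", "student"] },
];

// Returns the path to redirect to, or null when the request may proceed.
// `role` is null when no valid session (JWT) accompanied the request.
function resolveRedirect(pathname: string, role: Role | null): string | null {
  const rule = ROUTE_RULES.find(
    (r) => pathname === r.prefix || pathname.startsWith(r.prefix + "/"),
  );
  if (rule === undefined) return null; // route is not protected
  if (role === null) return "/login"; // no session: send to sign-in
  return rule.allowed.indexOf(role) !== -1 ? null : "/unauthorized";
}
```

Keeping the rule table in one place makes the application-level check easy to audit, while Row-Level Security remains the backstop if this layer has a bug.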

Integration architecture

Each grading subsystem runs as an independent containerized service. The OMR processor and the NER service are deployed to Google Cloud Run; the NLP models run on a Triton Inference Server instance on Vast.ai; the LLM is hosted by Replicate. The LMS talks to all of them through a FastAPI orchestration service, also on Cloud Run, which receives a GET /grade/{quiz_id} request, fetches the quiz submissions from Supabase, fans out calls to the three grading services, fuses the scores, and bulk-upserts the results back to the database.
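The fan-out and fuse step can be sketched as follows. The real orchestrator is a FastAPI (Python) service, so this TypeScript version only illustrates the control flow. The names `Grader` and `gradeQuiz` are hypothetical, and the fusion rule is injected as a callback because it is not specified here.

```typescript
// Each grader takes the answers for a quiz and resolves to one score per answer.
type Grader = (answers: string[]) => Promise<number[]>;

// Calls all three graders concurrently, then fuses the per-answer scores.
// `fuse` is supplied by the caller; this sketch does not assume a fusion rule.
async function gradeQuiz(
  answers: string[],
  graders: { sts: Grader; ner: Grader; llm: Grader },
  fuse: (sts: number, ner: number, llm: number) => number,
): Promise<number[]> {
  const [sts, ner, llm] = await Promise.all([
    graders.sts(answers),
    graders.ner(answers),
    graders.llm(answers),
  ]);
  return answers.map((_, i) => fuse(sts[i], ner[i], llm[i]));
}
```

With `Promise.all`, total latency tracks the slowest of the three services rather than their sum, which is the point of fanning out instead of calling them in sequence.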

The NLP and NER calls are parallelized where possible. Student answers are batched into groups of eight for the Triton gRPC client. NER requests fire asynchronously to Cloud Run using OAuth 2.0 bearer tokens obtained from the Google auth library. LLM prompts are also async and grouped by question to minimize token count: one prompt per question covers all students' answers for that question, rather than one prompt per student per question, which would multiply the Replicate API cost linearly with class size.
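The two grouping strategies in this paragraph (fixed-size batches for inference, one group per question for prompts) can be sketched as plain helpers. The `Answer` record and both function names are hypothetical, and the production service is written in Python, so treat this as an illustration of the logic only.

```typescript
// Splits items into consecutive batches of at most `size` elements.
function chunk<T>(items: T[], size: number): T[][] {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError("size must be a positive integer");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Hypothetical submission record; field names are assumptions.
interface Answer {
  studentId: string;
  questionId: string;
  text: string;
}

// Groups answers by question so each question yields a single prompt input.
function groupByQuestion(answers: Answer[]): Map<string, Answer[]> {
  const groups = new Map<string, Answer[]>();
  for (const answer of answers) {
    const group = groups.get(answer.questionId);
    if (group === undefined) {
      groups.set(answer.questionId, [answer]);
    } else {
      group.push(answer);
    }
  }
  return groups;
}
```

For a class of 30 students answering 5 questions, `groupByQuestion` yields 5 prompt inputs where a per-student, per-question scheme would need 150.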

Vercel's hobby tier imposes a 10-second serverless function timeout, which the grading pipeline comfortably exceeds for any non-trivial class size. The workaround is a polling loop: the initial request fires the grading service and returns immediately; a server component then polls the database every 15 seconds for the graded boolean on the quiz row; once it flips, the component fetches and renders the results. The client sees a loading state throughout. It is not elegant, but it fits inside the platform constraints without requiring a paid tier or a separate WebSocket server.
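The polling loop can be sketched as a small helper that takes the database check as a callback. This is a minimal sketch, not the project's actual component: `waitUntilGraded` and its options are hypothetical, the 15-second default comes from the description above, and the cap of 40 attempts is an assumption added so the loop cannot run forever.

```typescript
// Polls `isGraded` until it resolves true or the attempt budget runs out.
// `sleep` is injectable so the loop can be exercised without real delays.
async function waitUntilGraded(
  isGraded: () => Promise<boolean>,
  options: {
    intervalMs?: number;
    maxAttempts?: number; // assumption: the source does not mention a cap
    sleep?: (ms: number) => Promise<void>;
  } = {},
): Promise<boolean> {
  const intervalMs = options.intervalMs ?? 15000;
  const maxAttempts = options.maxAttempts ?? 40;
  const sleep =
    options.sleep ??
    ((ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms)));

  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    if (await isGraded()) return true;
    // Skip the final wait: there is no further check after the last attempt.
    if (attempt < maxAttempts - 1) await sleep(intervalMs);
  }
  return false; // caller decides how to surface a timeout
}
```

Here `isGraded` would wrap whatever query reads the graded boolean on the quiz row. Returning `false` on exhaustion lets the caller show an error state instead of an indefinite spinner.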

Challenges

The 10-second Vercel timeout was the most disruptive platform constraint. The polling solution works, but the grading result can appear up to 15 seconds after the pipeline finishes, which is visible latency. The right fix is either a paid hosting tier with longer execution limits or moving result delivery to a WebSocket push, but both were outside the project budget.

Row-Level Security at the database layer required writing PostgreSQL policies for every table rather than relying solely on application-level checks. This was time-consuming but the payoff is that a bug in the Next.js authorization middleware cannot expose or mutate data it shouldn't; the database will reject the query regardless. For a system that holds student grades, that guarantee is worth the effort.

The scope expanded significantly mid-project. The original plan did not include a full LMS; the GUI was scoped as a lightweight interface for the grading system. The decision to build Gravitas to a production-credible standard (real auth, real schema design, real deployment) meant the NLP model development phase ran over its planned timeline, compounded by the Dual-Tasking Fusion model failing to converge and requiring a pivot to the Multi STS Ensemble approach.

Gravitas Home

Gravitas Grading UI

Gravitas Module UI

Workaround for Vercel 10s Timeout